
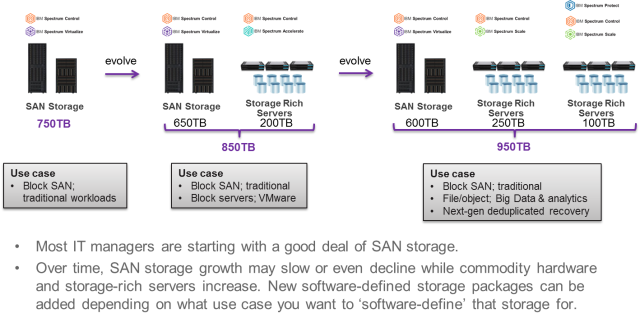

Cloud rocks! In a lot of ways, it has become a philosophy in organizations everywhere. Forrester has observed that the flexibility of its economics is too great for this model to fail. But they are also quick to note that “leaders can operate under a cloud philosophy without ever using public cloud, via cloud-like pricing for physical assets.” On the same point, IDC suggests that 60% of organizations will be using a consumption-based OPEX model for acquiring on-premises infrastructure in the next several years. One reason is that it reduces risk. IT managers in the datacenter can provide cloud-like flexibility with capacity always at the ready – without the risk or cost of paying for something that’s not being used. And cloud-like pricing prevents service providers from carrying the cost of additional infrastructure before they have sold that capacity to their customers.

In this post, I’m exploring cloud-like pricing for on-premises storage from IBM. It’s a straightforward approach that works the way IT managers would intuitively expect, removing the mystery often encountered in competing schemes and providing ongoing predictability in costs.

For IT managers and purchasing folks, the basic needs are really quite simple.

- Storage consumption goes up – and comes down. I only want to pay for what I’m using, nothing more.

- When storage capacity consumption bursts up, I need the capacity to be there and available.

- Storage technology evolves. When it’s time for new infrastructure, I don’t want overlapping costs, and I don’t want application disruption while my data moves.

It’s a simple set of needs, but for most vendors it has been much easier said than done. Some ship over-provisioned systems with relatively small ‘buffer capacity’ or ‘reserve capacity’ and a bill that goes up as the reserve is consumed. So far so good, but as the buffer is used up, the vendor has to install more capacity. There are two issues with this. One is that the bill doesn’t go back down when the added reserve is emptied again. Nice for them, isn’t it? The other is that most IT managers want to avoid periodic capacity upgrades that could result in workload disruptions. Some vendors offer to replace your storage controller as technology evolves, but they don’t replace the actual flash drives that are holding your data. What good is that? Technology evolves there too, doesn’t it? Or maybe you can bring in a complete replacement system, but the process of moving data from the old system to the new system is disruptive to your applications. It doesn’t have to be that way.

Meet IBM cloud pricing for on-premises storage. It’s simple.

Step 1: Do you have a rough forecast of how much storage capacity you might need over the next three, four, five years? Most IT managers can get in the ballpark. IBM and its Business Partners can help refine your thinking into a reasonably educated guess. Whatever number you jointly come up with, IBM is prepared to install the full amount of capacity on your premises. Remember, nobody has paid for anything yet, so maybe you want to edge your estimate up a little just to remove some risk or provide for unexpected bursts.

Step 2: With your educated guess established, take the total capacity and mark off some small part of it. For illustration purposes let’s say 35%, plus or minus (your projected growth rate may edge that number up or down a bit). This amount should represent the part you are absolutely certain you are going to utilize. Oftentimes this is the amount of capacity you are already using, so there is no ambiguity. The data already exists, and as soon as your new IBM storage is installed, you’ll already have that percentage of the capacity filled up. This percentage becomes your baseline.

Step 3: How do you want to pay for your baseline? You get to choose. You can rent it per TB/month with as little as a 12-month commitment and cancel with 60 days’ notice any time after that. Or you can lease it per TB/month for 3-5 years. Or you could choose to just purchase it all up front. It’s your call.

Step 4: Well, there isn’t really a step 4. You have enough storage installed to meet the demand you’ve forecasted over the next several years, but you are only using and paying for a small part (in our example about 35%). There’s nothing more for you to do except focus on managing your business. IBM Storage Insights, which I discussed in my post Software for Simplifying Storage Operations, is included with your cloud pricing to make life easier for your IT manager. It’s also there to meter your actual storage capacity usage. Your daily usage above the baseline is automatically averaged across the month and you receive a quarterly bill. You are only billed for what you are using, and importantly, if your usage comes back down, so does your bill. It’s that simple.
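To make the metering concrete, here is a minimal Python sketch of how a quarterly bill for usage above the baseline might be worked out. It is an illustration under stated assumptions, not IBM’s actual billing logic: the function names, the 1,000 TB installed capacity, the per-TB rate, and the daily usage figures are all hypothetical. I’m also assuming that each day’s usage above the baseline is averaged over the month and that days at or below the baseline add nothing.

```python
from statistics import mean


def monthly_overage_tb(daily_usage_tb, baseline_tb):
    """Average TB used above the baseline across one month of daily readings.

    Assumption: days at or below the baseline count as zero overage.
    """
    overages = [max(0.0, used - baseline_tb) for used in daily_usage_tb]
    return mean(overages)


def quarterly_overage_bill(months, baseline_tb, rate_per_tb_month):
    """Sum the metered charge for each month in the quarter."""
    return sum(
        monthly_overage_tb(days, baseline_tb) * rate_per_tb_month
        for days in months
    )


# Hypothetical figures for illustration only.
installed_tb = 1000                               # full forecast capacity on site
baseline_pct = 35                                 # the example baseline from Step 2
baseline_tb = installed_tb * baseline_pct / 100   # 350 TB, paid for under Step 3
rate = 10.0                                       # made-up price per TB per month

quarter = [
    [350.0] * 30,                  # month 1: usage sits at the baseline
    [350.0] * 15 + [450.0] * 15,   # month 2: a burst in the second half
    [400.0] * 30,                  # month 3: usage settles part-way back
]

bill = quarterly_overage_bill(quarter, baseline_tb, rate)
print(f"Baseline: {baseline_tb:.0f} TB, metered charge this quarter: {bill:.2f}")
```

In this made-up quarter, the month with no usage above the baseline contributes nothing to the metered charge, which is the "if your usage comes back down, so does your bill" behavior described above. The baseline itself is paid for separately, however you chose in Step 3.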

Fast forward three years

Actually, fast forward about two and a half years. It’s time to evaluate how new technology enhancements might benefit your organization and decide how you want to proceed at the end of your three-year term. You can choose to upgrade your complete system – controller AND storage – or keep your current system, or simply return the old system and walk away. If you choose to upgrade, you’ll feel like a kid in a candy shop.

- First, you get to choose what size system to upgrade to. Configure a bigger, faster system if you need to. Or a smaller system if you like. Or keep capacity the same, only with current technology. If you keep things the same, IBM will guarantee the same or lower monthly price.

- Next, IBM will provide the new storage a full 90 days prior to the term running out on your current storage. Using the power of the IBM Spectrum Virtualize software foundation, data can be transparently migrated from the old system to the new one. And even though the new system is already installed, payments on it start only after the 90-day migration window, giving you continuity in your costs. Out with the old, in with the new, and no overlapping costs.
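The timing of the upgrade can be sketched with a few lines of Python. This is only a rough illustration: the function name and the term end date are hypothetical, and I’m assuming that payments on the new system begin on the day the old term ends, which is what a 90-day window starting 90 days before term end implies.

```python
from datetime import date, timedelta

MIGRATION_WINDOW = timedelta(days=90)  # the 90-day window described above


def upgrade_timeline(term_end):
    """Return the key dates for a no-overlap upgrade, given the old term's end."""
    new_system_delivery = term_end - MIGRATION_WINDOW
    # Assumption: payments on the new system begin once the window closes.
    new_payments_start = new_system_delivery + MIGRATION_WINDOW
    return {
        "new_system_delivery": new_system_delivery,
        "migration_window_days": MIGRATION_WINDOW.days,
        "new_payments_start": new_payments_start,
    }


# Hypothetical term end date for illustration.
timeline = upgrade_timeline(date(2025, 12, 31))
for label, value in timeline.items():
    print(f"{label}: {value}")
```

Under these assumptions, the two systems sit side by side for the whole migration window while only one of them is being paid for.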

And if that’s not enough…

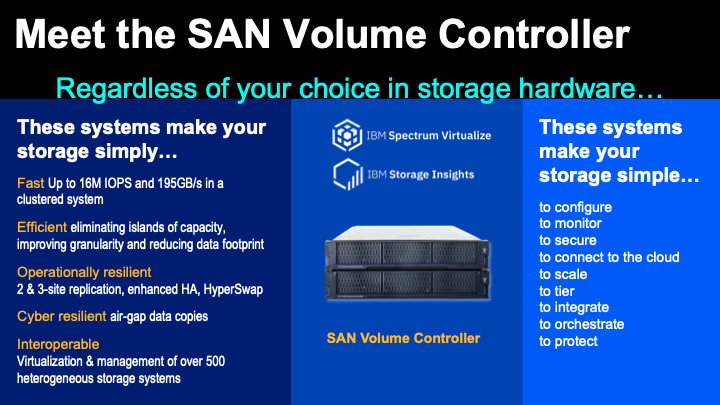

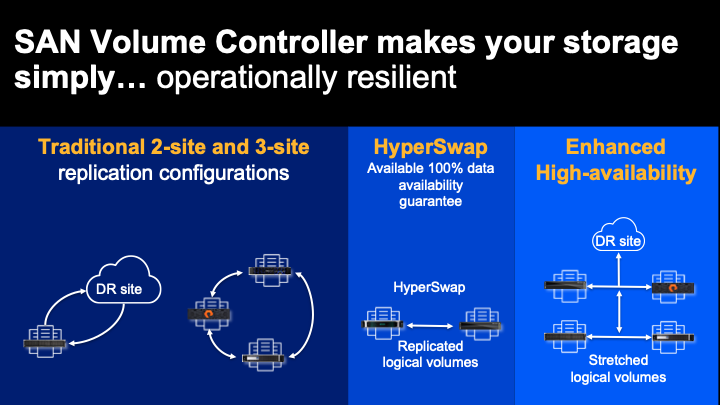

In my post on Elegant Flexibility – IBM Spectrum Virtualize, I included a section titled “These systems make your storage simply operationally resilient.” In a world with increasing demands on data availability, IBM offers HyperSwap. When HyperSwap is properly deployed by IBM Lab Services, IBM will guarantee 100% data availability. That’s not five nines (99.999%) or six nines (99.9999%); it’s 100% availability. The cost challenge is that HyperSwap storage configurations require twice as many storage systems. To help make that more affordable, with the cloud pricing for on-premises storage that we’ve been discussing, you can get the 2x systems needed for high availability and pay only 1.2x the baseline costs.
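Here is the 2x-for-1.2x arithmetic as a small Python sketch. The baseline cost is a made-up figure and the function name is hypothetical. The comparison against paying twice the baseline is my own assumption about what two systems would otherwise cost, not a quoted price.

```python
def hyperswap_cost(baseline_cost, multiplier_pct=120):
    """Cost of the doubled configuration at 1.2x (120%) of the baseline cost."""
    return baseline_cost * multiplier_pct / 100


# Hypothetical baseline cost for a single system.
baseline_cost = 100_000

ha_cost = hyperswap_cost(baseline_cost)   # two systems at 1.2x the baseline
double_cost = 2 * baseline_cost           # assumed cost of simply paying twice
saving = 1 - ha_cost / double_cost        # fraction saved versus paying twice
per_system = ha_cost / 2                  # effective cost per system

print(f"High-availability cost: {ha_cost:.0f} (vs {double_cost} at 2x)")
print(f"Saving versus 2x: {saving:.0%}, effective cost per system: {per_system:.0f}")
```

Put another way, under these assumptions each of the two systems effectively costs 0.6x the baseline.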

Share this post with your purchasing folks.

What do you think? Are you ready to shift some financial risk to your supplier? IBM is ready to be your partner!